A Generative AI checklist for corporate leaders

Lately, LinkedIn (where I hang out a lot for some ungodly reason) has been so saturated with AI Governance professionals talking about “responsible AI”, and about how to make sure your corporate AI rollout doesn’t conflict with HIPAA compliance or PII protection requirements, that one might almost forget that the leading AI ethicists have all been fired or have quit in protest, and that the entire field of AI ethics is now only a shadow of what it once was.

I’m not suggesting there’s anything wrong with AI Governance. I have friends in the field and they do important work. But AI Governance has no formal definition, so I sometimes encounter governance professionals who seem to play the role of corporate enabler rather than being stalwarts for ethical and social alignment. Since there is no formal definition of the role, it’s hard for me or anyone to judge how a governance expert conducts themselves, but I think I can safely say this: governance in the absence of ethics is a real problem.

Not only have the ethicists all been fired, but AI ethics has become a conversation non-starter in most companies. The rise of the “AI-first” CEO mandate comes at a time when corporate America is undergoing a widespread shift towards authoritarian leadership and workplace surveillance, which I see as a natural side effect of leaders becoming more and more isolated from their staff as they spend more time conversing with digital yes-men.

My understanding is that AI governance is supposed to be a way of translating AI ethics into operational practice. And so far, it seems like governance, unlike ethics, is still a viable career that hasn’t been put on the chopping block (yet). Of course, I know that compliance and security procedures are a big part of what the governance role seeks to address. But if no ethics due diligence has been conducted, governance frameworks are devoid of moral principles to follow, and there’s a danger that responsibility and accountability are replaced with mere lip service to serious concerns, and with looking the other way when the going gets tough. CEOs will hire an AI governance expert to make sure they’re not breaking any laws or taking any unnecessary financial risks, but I doubt many leaders give a shit about any of the actual social, cultural, psychological, or environmental risks, and I doubt those risks are usually captured by the boxes leaders are encouraged to check.

And that’s the idea behind my somewhat scathing, somewhat facetious checklist below. My hope is that some CEOs see it and squirm a little when they read it. Because what else can I do? I’ve tried offering them training courses in how to approach AI critically and ethically, but most of them ignored me. My message to them is: you need ethics, and you need to stop making decisions based on AI hype and FOMO. You can’t just ignore all of the serious issues presented by this technology. If you think you can hide from them or look away, you’re going to regret it down the road. A lot of CEOs in 2026 are going to have to deal with brand fallout and a client or customer exodus when they suffer a data breach, hallucination scandal, employee morale collapse, or crippling financial costs from AI dependency overload.

I also want to challenge governance experts. Are you really raising the most critical ethical concerns with your clients and not just staying in the safe zone of compliance and security audits? Can you even do so without having your contract cancelled? I don’t know. But I feel like you’re the last line of defence against an onslaught of authoritarian AI and the end of all empathy and reason, now that ethicists have left the building.

In reality, generative AI is hardly ready for primetime, and should never have been unleashed on an unsuspecting public the way it was. There are fundamental flaws, huge legal and ethical hurdles, and operational kinks that should have been ironed out in academia and private testing before being commercialized. As things now stand, it would be extremely difficult for any governance expert to design a framework for truly ethical adoption of AI: the resulting guidelines would be so restrictive and expensive that they would be unpalatable to most business leaders. My hunch is that many governance professionals know this but choose to see the glass as half full and have decided to do what they can to point clients in the right direction, even if they know the whole AI industry is premised on exaggeration, distraction, and lies.

Let me know what you think of the list (and the cited research) below.

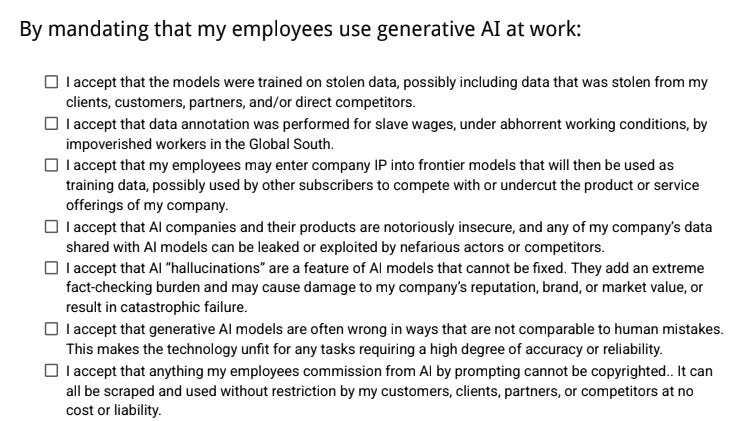

By mandating that my employees use generative AI at work:

☐ I accept that the models were trained on stolen data, possibly including data that was stolen from my clients, customers, partners, and/or direct competitors.

☐ I accept that data annotation was performed for slave wages, under abhorrent working conditions, by impoverished workers in the Global South.

☐ I accept that my employees may enter company IP into frontier models that will then be used as training data, possibly reappearing later as content for other subscribers that competes with or undercuts the product or service offerings of my company, even if there are strict company policies or guidelines to prevent such outcomes.

☐ I accept that AI companies and their products are notoriously insecure, and any of my company’s data shared with AI models can be leaked or exploited by nefarious actors or competitors, including sensitive financial data and information about company employees, executives, clients, customers, and partners.

☐ I accept that AI “hallucinations” are a feature of AI models that cannot be fixed. They add an extreme fact-checking burden and may cause damage to my company’s reputation, brand, or market value, or result in catastrophic failure in systems where financial costs will be incurred.

☐ I accept that generative AI models, including AI search engines, are often wrong in ways that are not comparable to human mistakes. This makes the technology unfit for any tasks requiring a high degree of accuracy or reliability.

☐ I accept that anything my employees commission from AI by prompting cannot be copyrighted, including software code, images, marketing copy, brand designs, and business materials. It can all be scraped and used without restriction by any of my customers, clients, partners, or competitors at no cost or liability.

☐ I accept that AI models drift to the average distribution of their training data, meaning that anyone using similar prompts, even across different models, will invariably see very similar outputs. Use of generative AI will not differentiate my company; it will do the opposite. I will also be promoting algorithmic bias.

☐ I accept that AI models are heavily subsidized and that all leading AI companies are currently running at extreme losses in order to capture market share. At some point this growth-at-all-costs phase will need to become a path to profitability. Therefore I accept that my AI costs could rise 10x or as much as 25x at some point in the near future. I accept that this could end up being more expensive and less fruitful than just hiring more humans.

☐ I accept that my employees will see an AI-first mandate as a temporary arrangement where they will be systematically training their own replacements, and that this will destroy company morale.

☐ I accept the evidence that use of generative AI at work increases, rather than reduces, stress and workload on employees, even managers, often leading to depression, disengagement and burnout.

☐ I accept that there is so far no evidence that use of AI models increases productivity with any statistical significance, or that anecdotal individual productivity converts into measurable, company-wide gains. An AI mandate will likely slow my company down and add more friction to many processes.

☐ I accept that I am participating in a shift to workplace automation that will exclude future generations from entering the workforce. Replacing entry-level positions with AI will short-circuit my company’s future.

☐ I accept the conclusions of multiple studies that show the majority of generative AI initiatives in the corporate environment fail and produce no measurable ROI.

☐ I accept that public sentiment is overwhelmingly unfavorable to corporations using generative AI and that following an “AI-first” strategy may do irreversible harm to my company’s brand, reputation, or market value, while opting out of generative AI might be the smarter choice.

☐ I accept that use of generative AI at work leads to breakdowns in communication, reduced quality of work, and reduced civility among employees, including aggressive competitive behaviors triggered by fear of layoffs, and even workplace sabotage.

☐ I accept the evidence that suggests outsourcing human thinking and creativity to algorithms can result in cognitive atrophy and the loss of skills, so by forcing my employees to use AI in their daily work I will encourage mental decline, erode structural capital and sacrifice institutional knowledge over time.

☐ I accept exposing my workers to the risk of “AI psychosis” already evident in hundreds of thousands of generative AI users with no history of mental illness, leading to depression, mania, and in extreme cases, violence and suicide.

Psst: if you’re on the same page as me, and feel like doing a little peaceful activism at work (don’t take any risk you’re not fully prepared for), here’s an abridged one-page PDF without links that you can print out and tape up in an environment of your choosing.

Fantastic, accurate checklist.

This article shows the workers' side of the issue:

Bosses say AI boosts productivity – workers say they’re drowning in ‘workslop’

Utterly brilliant! I think you've covered everything there Jim. Great job.